“Online lectures suck”. Who said that, and why?

The comment was made by a Stanford student concerning his experience with the Coursera course CS229a: Applied Machine Learning. Ben Rudolph is an on-campus, fee-paying ($50,000 p.a.) student who took the online class as part of his studies. It’s thus not surprising that he wouldn’t be particularly enthralled by having to watch a series of short videos rather than attend lectures and mix with his peers.

I’ve had a look at the online lectures and they’re competent: not just ‘lecture theatre capture’, but purpose-designed online presentations that are a mix of ‘talking head’ explanation and voice-over screen capture presentations. I’ve seen worse (and better*).

But it was his other issues with the course that make the case more concerning, especially the matter of ‘rigor’. In particular, Ben considers the assessment to be sub-standard, which should set alarm bells ringing for all concerned.

Assessment in CS229a consisted of a series of review questions and a weekly programming assignment. As they were self-assessed, the review questions were less problematic (in fact, no problem at all!). As Ben explains, they:

“were simple from the beginning to the end. … sometimes it’s good to just refresh what you learned in the lectures, but the questions hardly ever asked anything that the lecture didn’t explicitly state. A little thinking would have made these more interesting.”

As for the programming assignments,

“…the level of difficulty dropped off drastically as the quarter progressed. At its worst, I completed a few programming assignments without even knowing that the corresponding lectures had been released (I have never done machine learning in the past). This is not a tribute to a stroke of brilliance I had, but rather how worthless the assignments became. I completed the program without even knowing what I was doing. The pdfs and comments associated with the programming assignments became so informative and gave so many hints that almost no critical thinking was needed.”

Ben’s solution is to separate the internal and external classes, and he makes some sensible points in support of his plea. But that still leaves open the question of the rigour of the assessment for the online learners. And we can sympathize with his conclusion:

“The initiative that Stanford has taken to open up education is great. However, God help me if all my classes become 2 hour weekly online lectures with review questions and auto-graded programming exercises. Stanford can expect a letter from me asking to get a cut in my tuition if the classes begin to go the way of CS229a.”

And just so this is clear, it is not a new problem; similar issues about assessment have abounded in education for yonks. This particular example reminded me of the Diploma of Education in which I was enrolled some decades ago. To be blunt, it was lousy (in modern parlance, it sucked!), being one of those useless graduate diplomas cobbled together to give terrified novice teachers a soothing credential. It did nothing to enhance my teaching of maths to motor mechanic apprentices at 8:30 on a Monday morning at Hobart Technical College.

Two subjects in particular are memorable. The first, ‘Philosophy of Education’, was delivered by a venerable Professor of Philosophy, shortly before his retirement. My memory of Prof. Hardy is his apparent fixation on Plato’s Republic. After a couple of weeks I stopped going to lectures, read Bertrand Russell’s History of Western Philosophy and managed to pass the exam.

The second subject was the ‘Psychology of Education’, this time presented by a young and enthusiastic lecturer. He was keeping up with new and emerging ideas in educational psychology, and his fixation at the time was the Keller Plan, a version of mastery learning.

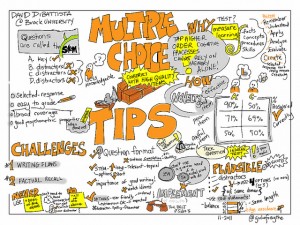

As applied by our erstwhile lecturer, there were no lectures; we were required to read sections of the set text and then take a series of multiple choice tests, for each of which we had to score almost perfectly (remember it’s about ‘mastery’) before we could proceed to the next one. We could take the same test (different version) as many times as we needed, without penalty. There was no exam.

Interesting? No, boring and futile. Once under way, I quickly realized that all I needed to do was scan the relevant reading shortly before taking the test and rely on short-term memory to identify the correct answers. The strategy worked just fine and I passed (it was a ‘pass only’ subject), but learned/remembered little or nothing about educational psychology. And its effect on the quality of my teaching? Zilch. But I wasn’t paying $50,000 per year for my education, so I laughed it off.

So yes, Ben, online lectures (can) suck, but as you rightly realize, that’s not the problem. The problem is the quality of the assessment, which should provide a demonstrable measure of how much and how well you’ve learned. It can and should also be an integral part of learning, not just a test of learning.

* Better online teaching? The example I’ve constantly drawn on over the past few years is the excellent online physics material produced at the University of New South Wales: PhysClips. If you want to see what can be done by a group of talented and creative physics academics, browse the site; it’s full of great ideas and applications. To my mind, this is how it should be done!

photo credit: doctorious via photopin cc

photo credit: giulia.forsythe via photopin cc